The Dark Side of AI: Pentagon Explosion Hoax Causes Temporary Stock Sell-Off and Raises Concerns

A remarkable demonstration of artificial intelligence (AI) has unfolded, showcasing its power in both positive and negative ways.

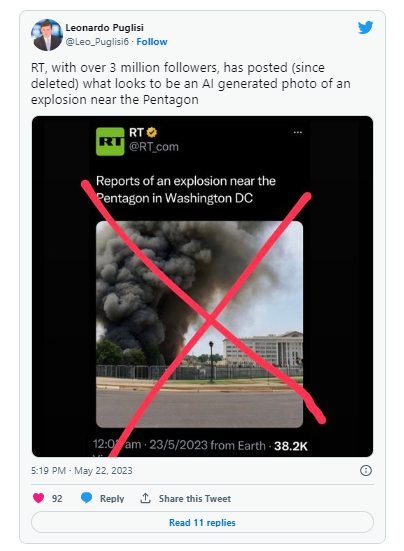

An AI-generated image depicting a fabricated explosion at the Pentagon surfaced and rapidly spread across social media platforms.

This alarming picture, featuring smoke emerging from the iconic building, was shared by multiple accounts, including a Russian state-owned media channel.

Notably, the false report of a Pentagon explosion also appeared on unofficial Twitter accounts that carried blue verification checkmarks.

This deepened the confusion and amplified the falsehood, underscoring the importance of rigorous source verification.

It also highlighted a predictable consequence of Elon Musk’s revised criteria for account verification.

As the viral image gained traction, the U.S. stock market experienced a momentary decline, though it swiftly rebounded once the photo was exposed as a hoax.

Bitcoin, the leading cryptocurrency, also saw a brief “flash crash” as the fake news circulated, with its price momentarily dropping to $26,500. It has since been recovering and is presently trading at $26,882, according to CoinGecko.

The hoax spread widely enough that the Arlington County Fire Department stepped in to address it.

Through a tweet, they clarified that there was no explosion or incident occurring at or near the Pentagon reservation, reassuring the public that there was no immediate danger or risk.

Instances of such deceptive practices have raised serious concerns among critics who question the unregulated development of AI.

Experts in the field have repeatedly cautioned against the potential misuse of advanced AI systems by malevolent actors, who could exploit them to propagate misinformation and create online chaos.

This incident is not the first of its kind. Viral AI-generated images have previously misled the public, including pictures of Pope Francis wearing a Balenciaga jacket, fabricated images of President Donald Trump being arrested, and deepfake videos featuring figures such as Elon Musk and Sam Bankman-Fried (SBF) promoting crypto scams.

Renowned figures have expressed their alarm over the proliferation of disinformation.

In fact, hundreds of tech experts have called for a six-month pause on advanced AI development until adequate safety guidelines are established. Even Dr. Geoffrey Hinton, widely regarded as the ‘Godfather of AI,’ resigned from his position at Google so that he could voice his concerns about potential AI risks without his remarks reflecting on his former employer.

Episodes of misinformation like this one feed the ongoing debate over the need for regulatory and ethical frameworks for AI. As AI becomes a more powerful tool in the hands of those spreading disinformation, the consequences can be tumultuous. For this reason, regulating AI is now not just important but urgent, because unchecked misuse can lead to disasters.